csv2vcs - A simple conversion tool for generating vCalendar data

csv2vcs enables you to convert comma-separated calendar data in a number of different formats to vCalendar-formatted output for use in e.g. Outlook or Palm Desktop.

The tool is very simple to use. Input data can be prepared in a plain text editor. A single line preceding the calendar data configures how the tool interprets the data that follows.
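To make the idea of a configuration line concrete, here is a minimal Ruby sketch. It assumes a hypothetical layout in which the first line simply names the columns (date, start, end, summary); the real csv2vcs configuration syntax may well differ. The names `csv_to_vcs` and `vcal_time` are made up for this illustration. The output follows the basic vCalendar 1.0 structure of one VEVENT per data line.

```ruby
# Hypothetical input: the first line names the fields of the lines that follow.
# This layout is an assumption for illustration, not the real csv2vcs syntax.
SAMPLE = <<~DATA
  date,start,end,summary
  2010-03-01,09:00,10:00,Project meeting
  2010-03-02,13:30,14:00,Dentist
DATA

# Turn "2010-03-01" and "09:00" into the compact form "20100301T090000".
def vcal_time(date, time)
  "#{date.delete('-')}T#{time.delete(':')}00"
end

# Convert the text to a vCalendar 1.0 document, one VEVENT per data line.
# Fields are split naively on commas, so quoted commas are not supported.
def csv_to_vcs(text)
  header, *rows = text.lines.map(&:chomp).reject(&:empty?)
  fields = header.split(",")
  out = ["BEGIN:VCALENDAR", "VERSION:1.0"]
  rows.each do |line|
    event = fields.zip(line.split(",")).to_h
    out << "BEGIN:VEVENT"
    out << "DTSTART:#{vcal_time(event['date'], event['start'])}"
    out << "DTEND:#{vcal_time(event['date'], event['end'])}"
    out << "SUMMARY:#{event['summary']}"
    out << "END:VEVENT"
  end
  out << "END:VCALENDAR"
  out.join("\r\n") + "\r\n"
end

puts csv_to_vcs(SAMPLE)
```

The header-driven lookup means the column order in the data lines can change as long as the first line changes with it, which is the point of having a configuration line at all.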

The tool is a Tcl script, so Tcl/Tk must be installed in order to run it.

Download

csv2vcs.tcl - Tool script

test.csv - Test input file

Usage

- Download csv2vcs.tcl to a suitable folder.

- Check that Tcl is installed by running tclsh from the command prompt.

# tclsh

- Print the tool's help text by running the tool with no parameters.

# tclsh csv2vcs.tcl

- Download the test input file test.csv to a suitable folder.

- Run the tool to convert the input file to vCalendar format. If the files are not in the current folder, path information needs to be included.

# tclsh csv2vcs.tcl test.csv test.vcs

- Try to import test.vcs into your calendar application, e.g. Palm Desktop.

Continue to explore the tool by generating your own input files. Good luck!

ClipboardBuffer - Manages multiple clips

ClipboardBuffer stores several textual clips in a list. Clips can simply be added to the list and retrieved again whenever they are needed. Example uses:

- Store temporary URLs from the browser.

- Login names and passwords. Be careful with the security, though.

- Your address information that you have to enter several times.

- Things to remember.
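The clip list idea can be sketched in a few lines of Ruby. This is only an illustration of the data structure described above; the class and method names (`ClipList`, `add`, `get`, `remove`) are invented here and are not ClipboardBuffer's actual (Java) API.

```ruby
# A minimal clip list: clips are kept in insertion order and fetched by
# position. Names are illustrative, not ClipboardBuffer's actual API.
class ClipList
  def initialize
    @clips = []
  end

  # Store a clip and return its position in the list.
  def add(text)
    @clips << text
    @clips.size - 1
  end

  # Retrieve a clip by the position that add returned; nil if past the end.
  def get(index)
    index >= 0 ? @clips[index] : nil
  end

  # Remove a clip, e.g. a password that should not linger in the list.
  # Later clips move up one position.
  def remove(index)
    @clips.delete_at(index)
  end

  def size
    @clips.size
  end
end

clips = ClipList.new
url = clips.add("http://www.java.com")
clips.add("Things to remember: buy milk")
puts clips.get(url)
```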

The tool requires Java 1.4 or later. Get it from http://www.java.com.

Download

ClipboardBuffer.zip - ClipboardBuffer application

Usage

- Download ClipboardBuffer.zip

- Extract the files to the root of the install folder, e.g. C:\Program Files\

- Read the README.txt file for further instructions.

- The application can be run by double-clicking the .jar file, but in that case persistence will not be enabled.

Hope you will find this tool useful!

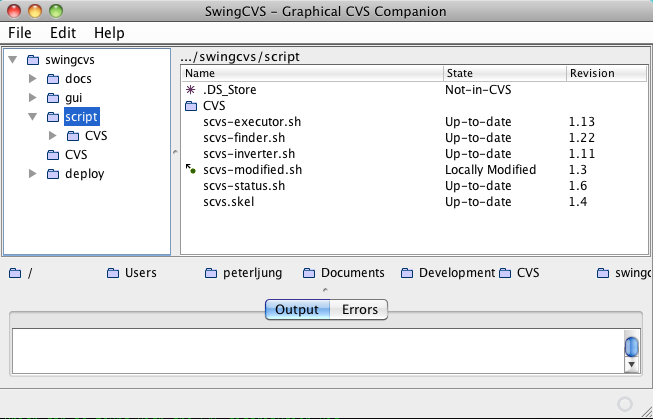

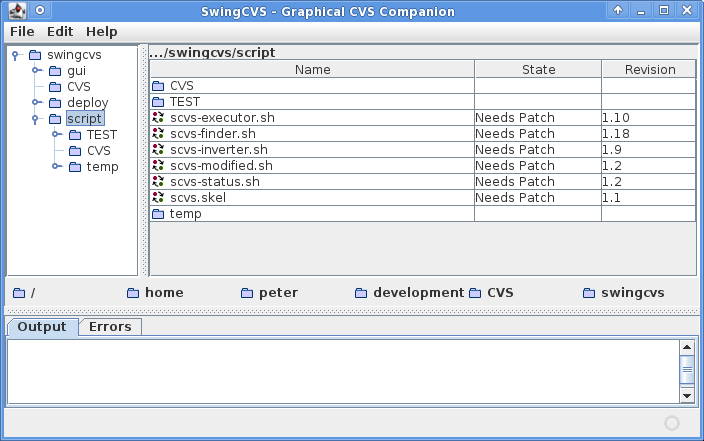

SwingCVS - Graphical CVS Companion

Screenshot on Mac OS X Snow Leopard running JDK 1.6.

Screenshot on OpenBSD 4.6 running Xfce and JDK 1.7.

A nifty little companion to cvs on the command line.

Download

The deployment package is self-explanatory. Just follow README.TXT in the compressed package.

Install instruction (from README.TXT)

Prerequisites

- bash installed (check with 'bash --version')

- cvs installed (check with 'cvs -v') (See CVSTips for some information regarding OpenBSD)

- java 1.5 (or later) installed (check with 'java -version')
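If you prefer to check all three prerequisites in one go, a small script can look for the executables on the search path. The Ruby sketch below is not part of the SwingCVS package; `find_tool` and `missing_tools` are hypothetical helper names. It only checks that the commands exist, not that java is version 1.5 or later.

```ruby
# Return the full path of an executable found on the search path, or nil.
# `path` defaults to the PATH environment variable; it is a parameter so the
# lookup can be tried against any directory list.
def find_tool(name, path = ENV.fetch("PATH", ""))
  path.split(File::PATH_SEPARATOR).each do |dir|
    next if dir.empty?
    candidate = File.join(dir, name)
    return candidate if File.file?(candidate) && File.executable?(candidate)
  end
  nil
end

# List the required tools that could not be found.
def missing_tools(names, path = ENV.fetch("PATH", ""))
  names.reject { |name| find_tool(name, path) }
end

missing = missing_tools(%w[bash cvs java])
if missing.empty?
  puts "All prerequisites found."
else
  puts "Missing: #{missing.join(', ')}"
end
```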

Installation

- Unpack this .tgz file

- Go to the scvs folder

- Run install.sh

- Run scvs.sh [-p ROOTFOLDER]

Example ...

cd /preferred/folder

tar xzf scvs.tgz

cd scvs

./install.sh

./scvs.sh

Help

Run ./scvs -h for help.

Rxl - Command Line Spreadsheet

Rxl is a minimalistic but somewhat useful spreadsheet program written in Ruby. It was started during a flight as a way to make the time fly as fast as the aeroplane. It did, until my laptop ran out of power. As usual, I hugely underestimated the effort needed to make something (even this small) useful. Most of the effort went into parsing input data. Considering the time spent on regular expressions, a full parser might have been more suitable.
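To give a feel for the regular-expression approach, here is a minimal sketch of how `<a1>`-style cell references could be resolved. It is not Rxl's actual source: the hash-based sheet, the `REFERENCE` pattern and the `evaluate` method are assumptions made for illustration. Each reference is replaced by the value of the referenced cell, and the resulting text is handed to Ruby for evaluation.

```ruby
# Cells are stored as text keyed by lower-case name, e.g. "a1" => "1".
# This is a simplified stand-in for illustration, not Rxl's real code.
REFERENCE = /<([a-z])(\d+)>/i

# Evaluate one cell: replace every <ref> by the value of that cell, then let
# Ruby evaluate the resulting expression. Empty cells count as 0.
def evaluate(sheet, name, seen = [])
  raise ArgumentError, "circular reference at #{name}" if seen.include?(name)
  source = sheet.fetch(name, "0")
  expanded = source.gsub(REFERENCE) do
    ref = "#{$1}#{$2}".downcase
    "(#{evaluate(sheet, ref, seen + [name])})"
  end
  eval(expanded) # acceptable for a toy; never eval untrusted input
end

sheet = { "a1" => "1", "a2" => "2", "a3" => "<a1> + <a2>", "b1" => "<a3> ** 2" }
puts evaluate(sheet, "a3")
puts evaluate(sheet, "b1")
```

Substituting text and calling `eval` is what makes "any other Ruby expression" work for free, at the cost of having no real grammar to report errors against.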

The current version works but could of course be improved a lot. Download and extract rxl.tgz, then run rxl.sh.

Installation and start up ...

$ ruby -v

ruby 1.8.7 (2009-06-12 patchlevel 174) [universal-darwin10.0]

$ tar xzf rxl.tgz

$ cd rxl

$ ./rxl.rb

$ ./rxl.rb

rxl>> help

rxl - ruby excel replacement on the command line

Commands:

h - show help

<A..Z><0..N> - cell (column, row)

s - show evaluated sheet

e - show entered sheet

e <cell> <data> - enter cell data

<data>,<data>,...<enter> add data for row and edit in next row

<data>;<data>;...<enter> add data for col and edit in next col

Cell fomatting:

"string"

1234, 0x1220 - numbers

<a1> - cell reference

<a1>-<a3> - row/column range

<a1>,<a2>, ... - cell set

Cell expressions:

+,-,*,/,** - evaluated in ruby priority order

<expr> ? <true_expr> : <false_expr>

sum(<cells>), mean(<cells>), var(<cells>), min(<cells>), max(<cells>)

Math::E, Math::PI - constants

1.0, 1234, 0x1220 - numbers

"text"

any other Ruby expression

Future:

- Add ReadLine support (http://bogojoker.com/readline/)

...

A sample data session ...

$ ./rxl.rb

rxl>> e a1 1,2

rxl>> e a2 2,2

rxl>> s

1, 2,

2, 2,

rxl>> e a3 sum(<a1>,<a2>),sum(<b1>,<b2>)

rxl>> s

1, 2,

2, 2,

3, 4,

rxl>> e c1 sum(<a1>,<b1>);sum(<a2>,<b2>);<c1>+<c2>

rxl>> s

1, 2, 3,

2, 2, 4,

3, 4, 7,

rxl>> s

1, 2, 3,

2, 2, 4,

3, 4, 7,

rxl>> q

$
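The row and column entry used in the session above (commas fill along a row, semicolons fill down a column) can be sketched as follows. This is an illustrative reconstruction, not Rxl's code; `split_top_level` and `enter` are hypothetical names. The one subtlety is that commas inside `sum(...)` must not split the input, so the separator is only honoured outside parentheses.

```ruby
# Split on a separator, ignoring separators nested inside parentheses,
# so "sum(<a1>,<a2>),2" yields ["sum(<a1>,<a2>)", "2"].
def split_top_level(text, sep)
  parts = [""]
  depth = 0
  text.each_char do |ch|
    depth += 1 if ch == "("
    depth -= 1 if ch == ")"
    if ch == sep && depth.zero?
      parts << ""
    else
      parts[-1] += ch
    end
  end
  parts
end

# Store data starting at `cell`: ";" moves down the column, "," along the row.
# Only single-letter columns are handled, matching the <A..Z><0..N> cell names.
def enter(sheet, cell, data)
  col = cell[0].downcase
  row = cell[1..-1].to_i
  down = split_top_level(data, ";")
  if down.size > 1
    down.each_with_index { |d, i| sheet["#{col}#{row + i}"] = d }
  else
    split_top_level(data, ",").each_with_index do |d, i|
      sheet["#{(col.ord + i).chr}#{row}"] = d
    end
  end
  sheet
end

# Replay the entries from the sample session and list the entered cells.
sheet = {}
enter(sheet, "a1", "1,2")
enter(sheet, "a2", "2,2")
enter(sheet, "a3", "sum(<a1>,<a2>),sum(<b1>,<b2>)")
enter(sheet, "c1", "sum(<a1>,<b1>);sum(<a2>,<b2>);<c1>+<c2>")
sheet.sort.each { |name, text| puts "#{name} = #{text}" }
```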

Hope you will find Rxl amusing.